Choosing the right engineering management platform is more critical than ever in 2026. This guide reviews the best alternatives to Jellyfish for engineering leaders, managers, and decision-makers who are seeking to optimize team performance, align engineering with business goals, and adapt to the rapidly evolving landscape of AI, developer experience, and workflow automation. With the increasing importance of actionable insights, real-time data, and seamless integration, selecting the right platform can directly impact your organization’s ability to deliver value, improve productivity, and stay competitive.

Jellyfish built its reputation as a pioneering engineering management platform. Since launching in 2017, the company has raised $114.5 million in funding from Accel, Insight Partners, and Tiger Global. Its core strength is aligning engineering work with business objectives, giving CFOs and VPs of Engineering a shared language around investment allocation, engineering effort, and resource planning, which are the inputs such platforms track for capacity planning and workload management.

That positioning served enterprise buyers well for years. But the engineering intelligence category has shifted. AI coding tools are now standard across most teams. The 2025 DORA Report introduced new measurement frameworks. Developer experience has become a board-level priority. Today, aligning engineering activities with business goals and supporting business teams with actionable insights is critical for organizations seeking to optimize outcomes.

Platforms must now provide seamless integration with existing tools to enable real-time data aggregation, supporting new measurement frameworks and the growing focus on developer experience.

The question is no longer “Where is my engineering time going?” It is “How do I measure the real impact of AI tools on delivery, code quality, and developer experience, all at once?” Making data-driven decisions is now essential for evaluating the impact of engineering investments and driving continuous improvement.

If you are evaluating Jellyfish alternatives, you are likely dealing with one or more of these friction points.

Jellyfish is a capable platform for enterprise engineering management. It does several things well: investment allocation, capacity planning, R&D cost reporting, and executive dashboards. For organizations with deep Jira workflows and clean data hygiene, it provides solid visibility into where engineering time goes. Its dashboards and reports center on quantitative metrics for monitoring team performance, but they may lack the qualitative, tailored insights needed for a more complete understanding of engineering productivity.

But several patterns consistently push teams toward alternatives.

Pricing requires a sales conversation. Jellyfish does not publish pricing publicly. According to Vendr’s 2026 analysis, buyers with 50–150 engineering seats on annual contracts often see pricing in the range of $50,000–$120,000 per year. For mid-market teams with 50–200 engineers, that is a significant commitment before you have even seen the platform in action.

Setup and onboarding take time. Multiple competitor analyses and user reviews on G2 note that Jellyfish has a steep learning curve. Users report that training is vital to use the product well, and initial configuration can take weeks. Several G2 reviewers cite complex setup and the need for dedicated staff to manage the platform.

Heavy Jira dependency. Jellyfish treats Jira as its primary system of record. For teams using Linear, GitHub Issues, or hybrid setups, this Jira-centricity can become a constraint. As Faros AI’s analysis points out, Jellyfish’s approach can undercount engineering activities not tied to Jira issues. When only quantitative data is considered, technical debt and workflow bottlenecks can be overlooked, impacting long-term engineering efficiency.

Limited AI coding impact measurement until recently. Jellyfish launched AI Impact tracking in late 2024 and has expanded it since. However, many teams evaluating alternatives report needing deeper AI measurement: not just adoption tracking (who is using Copilot or Cursor), but actual impact on cycle time, code quality, and PR outcomes across AI-assisted versus non-AI PRs.

No automated code review. Jellyfish does not include a code review agent. If you want AI-powered code review alongside your engineering analytics, you need a separate tool or a platform that bundles both.

Customization gaps. G2 reviews consistently flag limited customization as a friction point. Of the top complaint themes, 21 mentions specifically call out lack of custom reporting flexibility, and 19 mentions note limited features and integration depth. Teams increasingly need code-level and tailored insights to understand team performance and address their own workflow challenges.

Many software teams struggle with the limitations of purely quantitative data, realizing it doesn't tell them how to improve or what's happening outside of platforms like Git and Jira, which can hinder actionable progress and slow development speed.

Before comparing specific platforms, it helps to know what separates a useful engineering intelligence tool from one that creates more dashboard fatigue. Here is what matters in 2026. Seamless integration with existing tools and customizable dashboards are essential for capturing real-time data, enabling actionable insights, and supporting better decision-making across engineering teams.

Effective AI-powered workflow optimization requires tools that provide clear, actionable insights to highlight bottlenecks in the development process and offer specific recommendations for data-driven improvements. That in turn depends on automatic data capture from the tools teams already use.

Alternatives to Jellyfish, such as DX, LinearB, Swarmia, Haystack, Waydev, and Pluralsight Flow, address the main limitations of Jellyfish by offering a more comprehensive approach to engineering management. These platforms combine both qualitative and quantitative insights, allowing teams to track performance and identify bottlenecks more effectively. Platforms like Swarmia and Pluralsight Flow provide engineering teams with tools that focus on team dynamics and workflow optimization, which can be more beneficial than Jellyfish's top-down reporting approach. Jellyfish is often criticized for its limited customization and lack of focus on developer experience, which has led many teams to seek alternatives that offer better insights into daily challenges faced by developers. Additionally, alternatives like Haystack and Waydev emphasize real-time insights and proactive identification of bottlenecks, enhancing team productivity compared to Jellyfish's more rigid reporting structure.

When evaluating alternatives to Jellyfish, it's important to consider how some platforms position themselves as engineering effectiveness platforms—offering not just analytics, but comprehensive solutions for operational efficiency, code quality, and developer productivity. The table below compares seven alternatives across the capabilities that matter most for engineering leaders in 2026.

Notably, alternatives to Jellyfish such as DX and Typo AI combine both qualitative and quantitative insights, enabling teams to track performance and identify bottlenecks more effectively than platforms focused solely on high-level metrics.

Typo AI is an engineering effectiveness platform that combines SDLC visibility, AI coding tool impact measurement, automated AI code reviews, and developer experience surveys in a single product. It provides comprehensive code insights and tracks DORA and SPACE metrics to help teams optimize productivity, software health, and operational efficiency. Typo connects to GitHub, GitLab, Bitbucket, Jira, Linear, and CI/CD pipelines.

Where Typo differs from Jellyfish is scope and speed. Jellyfish focuses primarily on engineering-to-business alignment, investment allocation, and financial reporting. Typo starts from how work actually moves through the SDLC and layers in AI impact, code quality, and developer experience on top of that foundation, reflecting its broader mission to redefine engineering intelligence.

Key strengths:

Customer proof points:

Typo is a G2 Leader with 150+ reviews, trusted by 1,000+ engineering teams, and featured in Gartner’s Market Guide for Software Engineering Intelligence Platforms.

Best for: Engineering teams at mid-market SaaS companies (50–500 engineers) who need unified visibility across delivery, AI impact, code quality, and developer experience, without the enterprise pricing or multi-week onboarding.

LinearB focuses on engineering workflow automation, DORA metrics, and cycle time analytics. As an engineering analytics tool, it provides instant insights and workflow automation, delivering automated improvement actions like PR bots and alerts that help teams enforce working agreements around PR size, review turnaround, and merge frequency.

Where LinearB stands out: It is actionable at the team level. Instead of high-level allocation reporting, LinearB provides specific bottleneck identification, tracks key engineering metrics, and offers automated fixes. The free tier is generous for small teams, making it an accessible starting point.

Where it falls short versus Jellyfish alternatives: LinearB does not include native AI coding impact measurement. It does not offer automated code review. And it lacks DevEx survey capabilities. Teams who need to measure how AI tools affect delivery or who want code review bundled into their analytics platform will need to look elsewhere.

Best for: Engineering managers focused on process efficiency who want automated interventions in their development pipeline, particularly those starting with a free-tier budget.

Swarmia combines DORA metrics with developer experience signals. It tracks cycle time, deployment frequency, and review throughput alongside “working agreements” that let teams set norms like PR size limits and review turnaround expectations. Swarmia helps software development teams track quality metrics and improve productivity through actionable insights and real-time analytics.

Where Swarmia stands out: Clean UX. Team-first approach. Positions itself explicitly as the “humane alternative to engineering surveillance,” which resonates with engineering leaders who care about developer experience as much as process metrics. The company raised €10 million in June 2025, signaling continued growth.

Where it falls short: No automated code review. Limited AI coding impact measurement. Limited customization for complex enterprise needs. G2 and Reddit discussions consistently cite feature depth as the primary gap compared to more comprehensive platforms.

Best for: Teams starting their metrics journey who want clean dashboards and team-first norms without enterprise complexity.

DX (formerly GetDX) is an engineering intelligence platform founded by the researchers who created the DORA, SPACE, and DevEx frameworks. It combines structured developer surveys with system metrics through its Data Cloud product to measure developer experience, productivity friction, and organizational health, while also measuring developer productivity and individual performance metrics. DX incorporates developer feedback as a qualitative element, ensuring that both quantitative data and direct input from developers are used to identify issues and suggest improvements.

Where DX stands out: Deep research pedigree. The DX Core 4 framework is becoming a standard reference in the DevEx space. No other tool has the same academic backing for its measurement methodology. DX leverages the SPACE framework (Satisfaction and well-being, Performance, Activity, Communication and collaboration, Efficiency and flow) to provide a holistic view of developer experience and productivity.

Where it falls short: DX is primarily a survey and sentiment platform. It incorporates self-reported metrics from developers, which complement system-generated data, but it does not include automated code review, native AI coding impact measurement, or deep SDLC analytics. If you need to see how work moves through your pipeline, diagnose PR bottlenecks, or track AI tool impact on cycle time, DX will not cover those use cases alone.

Best for: Organizations investing heavily in developer experience improvement and platform engineering who want the most rigorous DevEx measurement methodology available.

Haystack is a lightweight engineering analytics platform focused on DORA metrics, delivery visibility, and team health. It targets teams that want engineering metrics without the complexity of enterprise platforms, while providing real-time data and visibility for distributed software teams.

Where Haystack stands out: Quick setup, transparent pricing, and a focused feature set that does not overwhelm smaller teams. For engineering managers overseeing 5–50 developers who need basic delivery visibility, Haystack provides fast time-to-value.

Where it falls short: No AI coding impact measurement. No automated code review. No DevEx surveys. As teams scale past 50–100 engineers or need to measure AI tool ROI, Haystack’s feature set may not keep pace.

Best for: Small-to-mid engineering teams (under 50 developers) who want straightforward delivery metrics without enterprise complexity or pricing.

Waydev is an engineering intelligence platform that uses DORA metrics, the SPACE framework, developer experience insights, and AI capabilities to provide delivery analytics. It automatically tracks work from Git activity, visualizes project timelines, and includes basic code review workflow features, while also offering detailed analytics on pull requests and the entire development lifecycle.

Where Waydev stands out: Automated work logs from Git activity eliminate manual entry. Project timeline visualization gives clear progress views. Sprint planning integration supports agile workflows. Waydev also supports the software delivery process by providing engineering insights that help teams optimize performance and identify bottlenecks.

Where it falls short: AI coding impact measurement is limited. Code review capabilities are basic compared to dedicated AI code review tools. DevEx survey depth does not match platforms like DX or Typo.

Best for: Teams that want Git-level activity analytics with automated work tracking and sprint planning support.

Pluralsight Flow (formerly GitPrime) tracks coding activity: commits, lines of code, code churn, and review patterns. It was acquired by Appfire from Pluralsight in February 2025 and now operates as a standalone product within the Appfire ecosystem alongside BigPicture PPM and 7pace Timetracker. Flow also tracks individual performance metrics, giving visibility into productivity for both teams and individual developers, similar to platforms like Code Climate Velocity.

Where Flow stands out: Mature Git activity analytics with ML-powered insights. The Appfire ecosystem positions it alongside project management tools. For organizations already invested in Pluralsight for developer training, Flow provides natural synergy.

Where it falls short: No AI coding impact measurement. No automated code review. No DevEx surveys. No manager productivity agents. The platform focuses on Git-level patterns rather than full SDLC visibility, and it does not cover sprint analytics, deployment metrics, or incident tracking.

Best for: Large organizations that want mature Git analytics and are already invested in the Appfire or Pluralsight ecosystem.

The right platform depends on what gap Jellyfish is not filling for your team. Here is a framework for making the decision. For engineering organizations, data-driven insights are essential: they enable leaders to make informed decisions, optimize workflows, and align engineering efforts with strategic goals.

If your primary need is measuring AI coding tool impact: Typo is the strongest option for improving developer productivity with AI intelligence. It natively tracks GitHub Copilot, Cursor, Claude Code, and CodeWhisperer, and compares AI-assisted versus non-AI PR outcomes on cycle time, quality, and developer experience. Jellyfish added AI Impact tracking recently, but Typo’s approach measures verified impact, not just adoption.

If you need automated code review bundled with analytics: Typo is the only platform on this list that includes a context-aware AI code review agent alongside SDLC analytics, AI impact measurement, and DevEx surveys. Every other alternative requires a separate code review tool. Typo also supports engineering productivity and team efficiency by surfacing actionable metrics and workflow bottlenecks.

If your primary need is developer experience measurement: DX offers the deepest research-backed methodology. Typo offers DevEx surveys combined with delivery analytics and AI impact in one platform. The tradeoff is depth of DevEx research (DX) versus breadth of the platform (Typo).

If budget is your primary constraint: LinearB’s free tier or Swarmia’s transparent pricing provide accessible starting points. Typo also offers flexible plans and a self-serve free trial with no sales call required.

If you need enterprise finance alignment: Jellyfish may still be the right choice. Its investment allocation, R&D capitalization, and DevFinOps features are designed for CFO-level conversations. Jellyfish stands out for tracking engineering investments and aligning them with business outcomes. No alternative on this list matches Jellyfish’s depth in financial engineering reporting.

The 2025 DORA Report found that 90% of developers now use AI coding tools. But the report also found that AI amplifies existing practices rather than fixing broken ones. Teams with poor DORA baselines do not improve with AI. They accelerate their dysfunction.

This creates a measurement problem. Most organizations track AI tool adoption through license counts. They know how many seats are active. They do not know whether those tools are actually improving delivery speed, code quality, or developer experience. Tracking engineering effort and resource allocation is essential for understanding the true impact of AI tools, as it reveals how team resources are distributed and whether productivity gains are realized.

That gap is why AI coding impact measurement has become the defining capability in the engineering intelligence category. It is not enough to know that 80% of your team uses Copilot. You need to know whether AI-assisted PRs merge faster, introduce more rework, or create code quality issues that show up downstream, so that you can tune the development process and address technical debt.
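One way to make that comparison concrete is to split merged PRs into AI-assisted and non-AI cohorts and compare merge time and rework. The Python sketch below is illustrative only: the `PullRequest` record, its `ai_assisted` and `needed_rework` flags, and the sample numbers are assumptions invented for this example, not the data model of any platform discussed here.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class PullRequest:
    ai_assisted: bool      # hypothetical flag, e.g. derived from coding-tool telemetry
    hours_to_merge: float  # time from PR opened to PR merged
    needed_rework: bool    # hypothetical flag: a follow-up fix touched the same change


def summarize(prs):
    """Return count, median merge time, and rework rate for one cohort."""
    if not prs:
        return None
    return {
        "count": len(prs),
        "median_hours_to_merge": median(pr.hours_to_merge for pr in prs),
        "rework_rate": sum(pr.needed_rework for pr in prs) / len(prs),
    }


def compare_cohorts(prs):
    """Split PRs by the ai_assisted flag and summarize each cohort."""
    ai = [pr for pr in prs if pr.ai_assisted]
    non_ai = [pr for pr in prs if not pr.ai_assisted]
    return {"ai_assisted": summarize(ai), "non_ai": summarize(non_ai)}


# Made-up sample data, for illustration only.
sample = [
    PullRequest(True, 4.0, False),
    PullRequest(True, 6.0, True),
    PullRequest(True, 5.0, False),
    PullRequest(True, 9.0, True),
    PullRequest(False, 10.0, False),
    PullRequest(False, 14.0, False),
    PullRequest(False, 12.0, True),
    PullRequest(False, 8.0, False),
]

result = compare_cohorts(sample)
for cohort, stats in result.items():
    print(cohort, stats)
```

In this invented sample the AI-assisted cohort merges faster but needs rework twice as often, which is the kind of trade-off that license counts alone cannot reveal. A real analysis would also need to control for PR size and for which teams adopt the tools.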

Platforms that can answer that question, with verified data from your actual engineering workflow, are the ones worth evaluating.

Jellyfish built a strong foundation in the engineering management space. For enterprise teams that need deep investment allocation, R&D capitalization, and finance alignment, it remains a capable option.

But the category has evolved. AI coding tools have changed what engineering leaders need to measure. Developer experience has become a board-level priority. The importance of software delivery, team collaboration, and operational efficiency has grown as organizations seek platforms that optimize the entire development lifecycle. And the bar for setup speed and pricing transparency has risen.

If you are looking for a platform that covers SDLC visibility, AI coding impact measurement, automated code reviews, and developer experience in a single product, with a setup that takes 60 seconds instead of 60 days, Typo is worth evaluating.

What is Jellyfish used for?

Jellyfish is an engineering management platform that aligns engineering work with business objectives. It provides visibility into investment allocation, resource planning, R&D capitalization, and delivery metrics. It integrates with Jira, GitHub, GitLab, and other development tools.

How much does Jellyfish cost?

Jellyfish does not publish pricing publicly. Based on Vendr’s 2026 market data, annual contracts for 50–150 engineering seats typically range from $50,000 to $120,000, depending on modules, integrations, and contract terms.

What are the main limitations of Jellyfish?

Common friction points reported by users include: steep learning curve and complex initial setup, heavy dependency on Jira data quality, no automated AI code review capability, limited custom reporting flexibility, lack of customizable dashboards, limited tracking of quality metrics, and opaque pricing that requires a sales conversation.

Does Jellyfish measure AI coding tool impact?

Jellyfish added AI Impact tracking in late 2024, which measures AI tool adoption and usage across coding assistants like GitHub Copilot and Cursor. However, other engineering analytics tools, such as Typo, provide more granular analysis of pull requests and quality metrics, enabling deeper AI-vs-non-AI PR comparison at the delivery impact level.

What is the best Jellyfish alternative for mid-market teams?

For mid-market engineering teams (50–500 engineers) that need unified SDLC visibility, AI coding impact measurement, automated code review, and DevEx surveys in a single platform, Typo offers the most comprehensive coverage with the fastest setup (60 seconds) and self-serve pricing.

AI impact on DORA metrics reveals a striking productivity paradox: individual developers merged 98% more pull requests while organizational software delivery performance remained essentially flat. The 2025 DORA Report—retitled “State of AI-assisted Software Development”—surveyed nearly 5,000 technology professionals and uncovered that AI tools amplify existing team capabilities rather than universally improving delivery metrics.

This article covers the 2025 DORA Report findings, the seven team archetypes that replaced traditional performance tiers, and practical measurement strategies for engineering leaders navigating AI adoption. The target audience includes VPs and Directors of Engineering responsible for measuring AI tool ROI, deployment frequency improvements, and overall engineering performance. Understanding why AI benefits vary so dramatically across teams has become essential for any organization investing in AI coding assistants.

Direct answer: AI acts as an amplifier that magnifies whatever work practices, cultural health, and platform maturity already exist in an organization. Strong teams see gains; teams with foundational challenges see their dysfunction worsen. This means engineering leaders must fix DORA metric baselines before expecting AI investment to deliver meaningful improvement.

By the end of this article, you will understand:

The 2025 DORA Report introduced a critical framing: AI acts as an “amplifier” or “multiplier” rather than a universal productivity booster. According to DevOps research conducted by Google Cloud, organizations with strong engineering systems, healthy data ecosystems, and mature internal platforms see positive gains from AI adoption. Organizations with weak foundations see those weaknesses magnified—higher change failure rate, more production failures, and increased rework.

AI adoption among software professionals surged to approximately 90% in 2025, up from roughly 75% the previous year. Most professionals now use AI tools daily, with median usage around two hours per day. Over 80% report improved individual productivity, and roughly 59% report improved code quality. Yet these perception-based gains don’t translate uniformly to organizational performance—the core insight that defines the AI era for engineering teams.

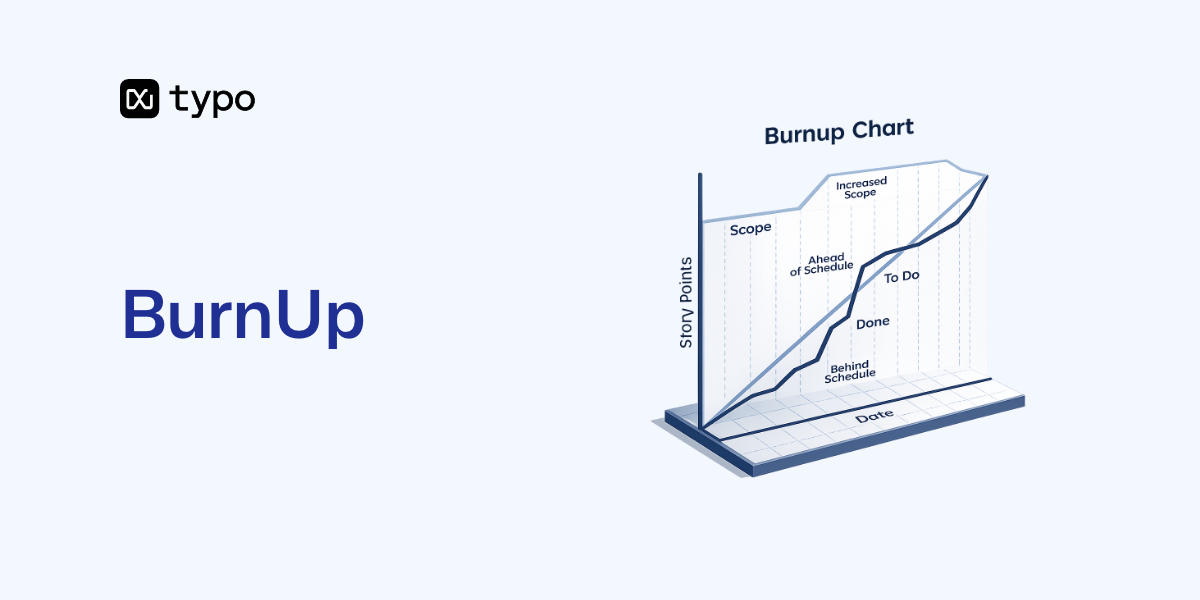

The DORA framework historically tracked four core metrics (Change Lead Time, Deployment Frequency, Change Failure Rate, and Mean Time to Recovery) as the foundation for measuring software delivery performance. These four metrics were used to categorize teams into different performance levels and benchmark improvement areas. In 2024, the DORA framework evolved to include five metrics, adding Deployment Rework Rate. The 2025 report then dropped the elite/high/medium/low performance tiers that defined earlier reports.
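As a rough illustration of the four core metrics, the Python sketch below computes them from a handful of deployment records. The `Deployment` record, its field names, and the sample data are assumptions made for this example; they are not taken from the DORA report or from any vendor's schema.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    committed_at: datetime                  # first commit of the change
    deployed_at: datetime                   # when the change reached production
    failed: bool = False                    # True if it caused a production failure
    restored_at: Optional[datetime] = None  # when service was restored, if it failed


def hours(delta: timedelta) -> float:
    return delta.total_seconds() / 3600


def dora_summary(deployments, period_days):
    """Compute the four core metrics over a reporting period."""
    if not deployments:
        raise ValueError("need at least one deployment")
    lead_times = [hours(d.deployed_at - d.committed_at) for d in deployments]
    failures = [d for d in deployments if d.failed]
    recoveries = [hours(d.restored_at - d.deployed_at)
                  for d in failures if d.restored_at is not None]
    return {
        "median_lead_time_hours": median(lead_times),
        "deployments_per_day": len(deployments) / period_days,
        "change_failure_rate": len(failures) / len(deployments),
        "mean_time_to_recovery_hours":
            sum(recoveries) / len(recoveries) if recoveries else None,
    }


# Made-up sample: four deployments in one week, two of which failed.
start = datetime(2025, 1, 6, 9, 0)
H = timedelta(hours=1)
sample = [
    Deployment(start, start + 24 * H),
    Deployment(start + 24 * H, start + 72 * H, failed=True,
               restored_at=start + 74 * H),
    Deployment(start + 72 * H, start + 84 * H),
    Deployment(start + 120 * H, start + 144 * H, failed=True,
               restored_at=start + 148 * H),
]

summary = dora_summary(sample, period_days=7)
print(summary)
```

The sketch uses a median for lead time because a single slow change can skew a mean; which aggregate to report is a choice each team has to make.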

Throughput metrics now include:

Instability metrics include:

The addition of Rework Rate acknowledges that failures aren’t always outright rollbacks. Many disruptions are remediated via additional fixes, and tracking this provides a more complete picture of delivery stability. New metrics added to the DORA framework include Deployment Rework Rate and measures of AI Code Share, Code Durability, and Complexity-Adjusted Throughput.

Deployment Rework Rate measures the frequency of unplanned deployments required due to production issues.

AI Code Share tracks the proportion of code generated by AI tools.

Code Durability assesses how long code survives without major rework.

Complexity-Adjusted Throughput accounts for the complexity of changes when measuring delivery speed.
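The four definitions above can each be read as a simple ratio or weighted sum. The Python sketch below shows one possible reading; the function names, the choice of inputs (for example, lines surviving after an observation window as the basis for durability), and the numbers in the usage example are assumptions for illustration, not official formulas.

```python
def rework_rate(total_deployments: int, unplanned_fix_deployments: int) -> float:
    """Share of deployments that were unplanned fixes for production issues."""
    if total_deployments == 0:
        return 0.0
    return unplanned_fix_deployments / total_deployments


def ai_code_share(ai_generated_lines: int, total_lines: int) -> float:
    """Proportion of changed lines attributed to AI tools (attribution is assumed)."""
    if total_lines == 0:
        return 0.0
    return ai_generated_lines / total_lines


def code_durability(lines_added: int, lines_surviving: int) -> float:
    """Fraction of added lines still unchanged after an observation window.

    The window length (for example 90 days) is an assumption left to the caller.
    """
    if lines_added == 0:
        return 0.0
    return lines_surviving / lines_added


def complexity_adjusted_throughput(change_weights, period_weeks: float) -> float:
    """Sum of per-change complexity weights delivered per week.

    The weights are hypothetical, e.g. 1 for a trivial change, 5 for a complex one.
    """
    if period_weeks <= 0:
        raise ValueError("period_weeks must be positive")
    return sum(change_weights) / period_weeks


# Usage with made-up numbers.
print(rework_rate(40, 6))                            # 6 of 40 deployments were fixes
print(ai_code_share(3000, 12000))                    # 3,000 of 12,000 lines from AI
print(code_durability(1000, 820))                    # 820 of 1,000 lines survived
print(complexity_adjusted_throughput([1, 3, 5, 1], 2))
```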

This evolution directly addresses AI-era challenges where AI-generated code may increase deployment volume while simultaneously creating quality assurance burdens downstream. Lead Time for Changes can drop initially as AI accelerates code writing, but bottlenecks may shift to code review, increasing the review time significantly. Tracking code that survives without major rework over time is also important for understanding long-term stability.

Research shows that platform engineering stands out as the primary enabler of successful AI adoption. Approximately 90% of organizations have adopted at least one internal developer platform, and 76% have dedicated platform teams. High-quality internal platforms correlate strongly with AI amplification benefits: teams can move faster because CI/CD pipelines, monitoring, version control practices, and developer experience infrastructure absorb the increased code velocity AI enables, especially on teams that already treat DORA metrics as a guide to performance.

Without strong platforms, the output of AI tools creates chaos. More committed code flowing through immature pipelines leads to bottlenecks in code review, longer queues, and ultimately more failed deployments. The DORA AI capabilities model emphasizes that platform prerequisites must exist before AI adoption can translate individual developer productivity into organizational outcomes.

This connection between foundational capabilities and the productivity paradox explains why some high-performing teams thrive with AI while others struggle.

The productivity paradox represents the most significant finding from 2025: individual developers produce dramatically more output, but engineering teams don’t see proportional improvements in delivery speed or business outcomes. Faros AI, analyzing telemetry from over 10,000 developers, quantified this gap with precision that survey data alone cannot provide, which underscores both the strengths and the limits of DORA metrics for continuous delivery.

At the individual level, AI-assisted coding delivers measurable improvements:

Individual developers report that AI coding assistants help them code faster, produce better documentation, and move through routine tasks with less friction. These gains are real and substantial. The challenge is that individual productivity improvements don’t automatically flow through to organizational performance.

Despite the surge in individual output, Faros AI’s telemetry revealed that organizational delivery metrics—deployment frequency, lead time, and the ability to quickly restore service after incidents (recovery speed)—showed no noticeable improvement. The traditional DORA metrics remained essentially flat across their sample.

Worse, several quality and efficiency signals degraded:

This data reveals where AI benefits evaporate: somewhere between individual contribution and organizational delivery, bottlenecks absorb the productivity gains. The complete picture shows AI helps individual developers produce more, but without corresponding improvements in review processes, pipeline efficiency, and quality assurance, that output creates downstream burden rather than business outcomes and often surfaces as classic signs of declining DORA metrics.

The DORA AI capabilities model identifies seven foundational practices that determine whether AI adoption succeeds or fails at the organizational level:

Teams that score well on these seven capabilities convert AI adoption into real performance benefits. Teams lacking these foundations experience the amplifier effect negatively—AI magnifies their dysfunction rather than solving it.

The 2025 DORA Report replaced the traditional linear performance tiers (Elite, High, Medium, Low) with seven team archetypes. This shift reflects a more nuanced understanding that team performance is multidimensional—throughput matters, but so does instability, team health, valuable work time, friction, and burnout, which aligns with newer DORA metrics guides for engineering leaders that emphasize a broader view of performance.

The seven archetypes are built from multiple dimensions, which still rely on mastering core DORA metrics implementation:

Gene Kim and the DORA researchers developed this framework because teams with identical DORA metrics might have vastly different experiences and outcomes. A team deploying frequently with low failure rate but high burnout requires different interventions than one with the same metrics but healthy team dynamics.

Prioritize establishing basic CI/CD pipelines, test coverage, build quality, and simple rollback mechanisms. AI adoption before these foundations exist will amplify chaos.

Address technical debt, modularize monolithic systems, and create internal platforms to standardize processes. AI tools can help with code modernization, but platform investment must come first.

Identify process friction—reviews, decision bottlenecks, approval chains—and streamline or automate them. Adding AI-generated code to a team already drowning in review backlog makes things worse.

Guard against quality degradation by monitoring instability metrics closely. Success creates risk: as throughput increases, maintaining code quality and architecture discipline becomes harder.

Challenge: HR hierarchies define teams administratively, but actual collaboration patterns don’t match org charts. AI tool adoption may be high in one administrative group while the engineers actually working together span multiple groups.

Solution: Combine HR hierarchies with telemetry data to measure actual collaboration patterns. Track who reviews whose code, who co-authors changes, and where knowledge flows. This provides a more accurate picture of where AI adoption is actually impacting delivery.
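A minimal sketch of that idea, assuming review telemetry is available as (reviewer, author) pairs and that an HR export maps each engineer to an administrative team. The names, team labels, and data below are hypothetical.

```python
from collections import Counter


def review_edges(reviews):
    """Count how often each reviewer reviewed each author's code."""
    return Counter(reviews)


def cross_team_share(reviews, hr_team):
    """Fraction of reviews where reviewer and author sit in different HR teams."""
    if not reviews:
        return 0.0
    crossing = sum(
        1 for reviewer, author in reviews
        if hr_team[reviewer] != hr_team[author]
    )
    return crossing / len(reviews)


# Hypothetical telemetry: one (reviewer, author) pair per completed review.
reviews = [
    ("ana", "ben"),
    ("ana", "ben"),
    ("ben", "ana"),
    ("chen", "ana"),
    ("dee", "chen"),
    ("ana", "dee"),
]

# Hypothetical HR hierarchy export.
hr_team = {"ana": "payments", "ben": "payments",
           "chen": "platform", "dee": "platform"}

edges = review_edges(reviews)
share = cross_team_share(reviews, hr_team)
print(edges.most_common(1))
print(round(share, 2))
```

A high cross-team share suggests that reporting AI adoption per administrative group will misattribute impact, since the people actually reviewing and shipping code together span several groups.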

Challenge: Developers move between teams, change roles, and contribute to multiple repositories. Attributing AI impact to specific teams or projects becomes unreliable.

Solution: Track AI-influenced code contributions across team boundaries with proper tooling. Engineering intelligence platforms like Typo can measure AI-influenced PR outcomes with verified data rather than relying on license adoption estimates or self-reported usage, which is critical when implementing DORA DevOps metrics in large organizations.

Challenge: Traditional DORA metrics don’t distinguish between AI-generated code and human-written code. You can’t assess whether AI is helping or hurting without this visibility.

Solution: Layer AI adoption rate, acceptance rates, and quality impact on traditional DORA metrics. Track:

Challenge: AI productivity gains evaporate somewhere in the delivery pipeline, but without end-to-end visibility, you can’t identify where.

Solution: Implement Value Stream Management to track flow from ideation through commit, review, QA, deploy, and post-release monitoring. This approach reveals where time or defects accumulate, often in review queues or integration testing phases that become bottlenecks when AI dramatically increases deployment frequency upstream. It depends on accurately measuring DORA metrics across the pipeline.
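One way to see where time accumulates is to compute the median hours spent in each stage and pick the largest. This is a minimal sketch: the stage names, the timestamp fields, and the sample work items are assumptions, not the schema of any particular Value Stream Management tool.

```python
from datetime import datetime
from statistics import median

STAGES = ["commit", "review", "qa", "deploy"]  # assumed stage names

# Hypothetical work items: the time each stage was entered, plus "done".
items = [
    {"commit": "2026-01-05T09:00", "review": "2026-01-05T11:00",
     "qa": "2026-01-06T15:00", "deploy": "2026-01-06T17:00", "done": "2026-01-06T18:00"},
    {"commit": "2026-01-07T09:00", "review": "2026-01-07T10:00",
     "qa": "2026-01-08T10:00", "deploy": "2026-01-08T13:00", "done": "2026-01-08T14:00"},
]


def stage_hours(item):
    """Hours spent in each stage, measured until the next stage begins."""
    marks = [datetime.fromisoformat(item[name]) for name in STAGES + ["done"]]
    return {
        stage: (marks[i + 1] - marks[i]).total_seconds() / 3600
        for i, stage in enumerate(STAGES)
    }


def median_stage_hours(work_items):
    per_item = [stage_hours(item) for item in work_items]
    return {stage: median(d[stage] for d in per_item) for stage in STAGES}


def bottleneck(work_items):
    """Stage with the highest median duration."""
    medians = median_stage_hours(work_items)
    return max(medians, key=medians.get)


medians = median_stage_hours(items)
slowest = bottleneck(items)
```

With this sample data the review stage dominates, which is the pattern the text describes when review capacity does not keep up with code volume.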

The 2025 DORA Report confirms that AI amplifies existing team patterns rather than uniformly improving software delivery performance. Teams with strong DORA baselines, mature platforms, and healthy engineering cultures see AI benefits compound. Teams with foundational challenges see AI worsen their dysfunction. The productivity paradox—individual gains that don’t translate to organizational outcomes—will persist until engineering leaders address the bottlenecks between developer output and business value delivery.

Immediate actions for engineering leaders:

The window for action is approximately 12 months. Organizations that successfully integrate AI with strong DORA foundations will achieve meaningful improvement in delivery speed and quality. Those that add AI to broken systems will see competitive disadvantages compound as their instability metrics worsen while competitors pull ahead.

Related topics worth exploring: Value Stream Management for end-to-end visibility, DevEx measurement for understanding developer friction, and AI ROI frameworks that connect tool investment to business outcomes.

The 2025 DORA Report found that approximately 90% of developers now use AI tools, with over 80% reporting productivity gains at the individual level. The central finding is that AI acts as an amplifier—magnifying organizational strengths and weaknesses rather than uniformly improving performance.

The report introduced seven critical capabilities that determine whether AI benefits scale to organizational performance: governance clarity, healthy data ecosystems, AI-accessible internal data, strong version control practices, small-batch workflows, user-centric focus, and quality internal platforms.

Notably, DORA researchers found no correlation between AI adoption and increased developer burnout, possibly because developers feel more productive even when downstream organizational stress increases.

AI improves individual developer metrics but creates organizational delivery challenges. Teams with strong DORA baselines see amplified benefits; weak teams see amplified dysfunction.

Quality and stability signals often worsen despite throughput improvements. Faros AI telemetry showed bug rates increased approximately 9% and code review time increased 91% as AI-generated code volume overwhelmed review capacity.

Platform engineering maturity determines AI success more than tool adoption rates. Organizations with strong CI/CD pipelines, monitoring, and internal platforms convert AI productivity into delivery improvements. Organizations lacking these foundations see AI create more chaos.

Deployment frequency increases due to AI-generated code volume, but this may not reflect meaningful output. More deployments don’t automatically translate to faster value delivery if those deployments require rework or cause production incidents.

Lead time for changes reduces for individual contributions, but review bottlenecks increase as reviewers struggle to keep pace with higher code volume. The 91% increase in review time documented by Faros AI shows where individual lead time gains get absorbed.

Engineering leaders need to measure complexity-adjusted throughput rather than raw deployment counts. Failed deployment recovery time becomes a more critical metric than traditional MTTR because it captures the full cost of instability.

The seven team archetypes are: Harmonious High-Achievers, Pragmatic Performers, Stable and Methodical, Constrained by Process, Legacy Bottleneck, High Impact Low Cadence, and Foundational Challenges.

Each archetype requires different AI adoption strategies and measurement approaches. Multidimensional classification considers throughput, stability, team well-being, friction, and time spent on valuable work—not just the four traditional DORA metrics.

One-size-fits-all AI strategies fail because a Legacy Bottleneck team needs platform investment before AI adoption, while Constrained by Process teams need to streamline workflows first. Harmonious High-Achievers can adopt AI aggressively but must monitor quality degradation.

Engineering leaders should combine traditional DORA metrics with AI adoption rates and code quality indicators. This means tracking not just deployment frequency and lead time, but also AI-influenced PR outcomes, PR size trends, review time changes, and rework rate.
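A simple way to layer these signals is to split PRs into AI-influenced and non-AI cohorts and compare size, review time, and rework. The sketch below assumes each PR record already carries a trustworthy `ai_influenced` flag; the field names and sample values are hypothetical.

```python
from statistics import median

# Hypothetical PR records; "ai_influenced" is assumed to come from verified tooling.
prs = [
    {"ai_influenced": True, "lines_changed": 420, "review_hours": 30.0, "reworked": True},
    {"ai_influenced": True, "lines_changed": 380, "review_hours": 22.0, "reworked": False},
    {"ai_influenced": False, "lines_changed": 150, "review_hours": 6.0, "reworked": False},
    {"ai_influenced": False, "lines_changed": 210, "review_hours": 10.0, "reworked": False},
]


def cohort_summary(records, ai_influenced):
    """Summarize PR size, review time, and rework rate for one cohort."""
    cohort = [r for r in records if r["ai_influenced"] is ai_influenced]
    if not cohort:
        return None
    return {
        "count": len(cohort),
        "median_lines": median(r["lines_changed"] for r in cohort),
        "median_review_hours": median(r["review_hours"] for r in cohort),
        "rework_rate": sum(r["reworked"] for r in cohort) / len(cohort),
    }


ai = cohort_summary(prs, True)
human = cohort_summary(prs, False)
adoption_rate = ai["count"] / len(prs)
```

Cohort differences like these are correlational. Task mix and author experience can differ between cohorts, so treat the comparison as a prompt for investigation rather than proof of causation.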

Track AI-influenced PR outcomes with verified data rather than license adoption estimates. Engineering intelligence platforms like Typo provide visibility into actual AI usage patterns and their correlation with delivery and quality outcomes, complementing high-level explanations of DORA metrics with practical insights.

Implement Value Stream Management to identify where AI gains evaporate in the delivery pipeline. Often, review queues, integration testing, or deployment approval processes become bottlenecks that absorb individual productivity improvements before they translate to business outcomes.

Use engineering intelligence platforms to correlate AI usage with delivery metrics, quality signals, and developer experience indicators. This comprehensive measurement approach provides actionable insights that surface problems before they compound.

GitHub Copilot, Cursor, and Claude Code represent the three dominant paradigms in AI coding tools for 2026, each addressing fundamentally different engineering workflow needs. With 85% of developers now using AI tools regularly and engineering leaders actively comparing options in ChatGPT and Claude conversations, choosing the right AI coding assistant has become a strategic decision with measurable impact on delivery speed and code quality.

This guide covers performance benchmarks, pricing analysis, enterprise readiness, and measurable productivity impact specifically for engineering teams of 20-500 developers. It falls outside our scope to address hobbyist use cases or tools beyond these three leaders. The target audience is engineering managers, VPs of Engineering, and technical leads who need data-driven comparisons rather than developer preference debates.

The direct answer: GitHub Copilot excels at IDE integration and enterprise governance with 20M+ users and Fortune 100 adoption. Cursor leads in flow state maintenance and multi-file editing for small-to-medium tasks. Claude Code dominates complex reasoning and architecture changes with its 1M token context window and 80.8% SWE-bench score.

By the end of this comparison, you will:

While these three tools boost individual productivity, measuring their actual impact on delivery speed and code quality requires dedicated engineering intelligence platforms that track AI-influenced outcomes across your entire codebase.

The 2026 landscape of AI coding tools has crystallized into three distinct approaches: IDE-integrated completion tools that augment familiar interfaces, AI-native editing environments that reimagine the development workflow entirely, and terminal-based autonomous agents that execute complex tasks independently. Understanding these categories is essential because each addresses different engineering bottlenecks.

IDE-integrated tools like GitHub Copilot work within your existing development environment. GitHub Copilot is an extension that works across multiple IDEs, making it the only tool among the three that supports a wide range of editors without requiring a switch. Developers keep their familiar interface, existing extensions, and muscle memory while gaining inline suggestions and chat capabilities. This approach minimizes change management friction and enables gradual adoption across teams using VS Code, JetBrains, or Neovim.

Standalone solutions like Cursor require switching development environments entirely. Cursor is a standalone IDE built as a VS Code fork with AI integrated into every workflow, making it a complete editor redesigned around AI-assisted development. The VS Code lineage keeps the interface familiar, but teams still have to switch editors and migrate configurations. This tradeoff delivers deeper AI integration at the cost of adoption friction. Enterprise teams often find IDE-integrated approaches easier to roll out, while power users willing to embrace change may prefer the cohesion of AI-native environments.

Code completion tools focus on high-frequency, low-friction suggestions. You write code, and the AI-generated code appears inline, accepted with a single keystroke. This approach optimizes for flow state and immediate productivity on the current file.

Autonomous coding through agent mode takes a fundamentally different approach. You describe a task in natural language, and the terminal agent executes multi-step tasks across multiple files, potentially generating entire features or refactoring existing codebases. Claude Code is a terminal-based AI coding agent that autonomously writes, refactors, debugs, and deploys code, a different approach from IDE-integrated tools. Claude Code leads this category, achieving higher solve rates on complex problems but requiring developers to adapt to conversational coding workflows.

The choice between approaches depends on your primary bottleneck. If developers spend most time on incremental coding, autocomplete delivers immediate time saved. If architectural changes, debugging intermittent issues, or navigating very large codebases consume significant cycles, autonomous agents provide greater leverage.

Building on these foundational distinctions, each tool demonstrates specific capabilities and measurable impact that matter for engineering teams evaluating options.

GitHub Copilot serves over 20 million developers and has become the Fortune 100 standard for AI-assisted development. Its deep integration with the GitHub ecosystem provides seamless workflow integration from code completion through pull request review.

Core strengths: Cross-IDE support spans Visual Studio, VS Code, JetBrains, Neovim, and CLI tools. Enterprise compliance features include SOC 2 certification, IP indemnification, and organizational policy controls. The Business tier ($19/user/month) provides admin controls and 300 premium requests monthly; Enterprise ($39/user/month) adds repository indexing, custom fine-tuned models (beta), and 1,000 premium requests.
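To put the per-seat prices in team terms, the arithmetic below projects annual license cost for the 20 to 500 developer range this guide targets. It uses only the $19 and $39 list prices quoted above and ignores premium-request overages, discounts, and taxes, so treat the figures as a starting estimate.

```python
# Per-seat monthly list prices quoted above (USD). Verify current pricing before budgeting.
COPILOT_PRICES = {"business": 19, "enterprise": 39}


def annual_license_cost(seats, tier, prices=COPILOT_PRICES):
    """Seats x monthly price x 12 months, with no overages or discounts."""
    return seats * prices[tier] * 12


team_sizes = [20, 100, 500]
scenarios = {
    size: {tier: annual_license_cost(size, tier) for tier in COPILOT_PRICES}
    for size in team_sizes
}
```

For a 100-developer team this works out to $22,800 per year on Business and $46,800 on Enterprise.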

Measurable impact: Best for enterprise teams needing consistent autocomplete across diverse development environments. Studies show inline suggestion acceptance rates of 35-40% without further editing. Agent mode and code review features enable multi-file changes, though not as autonomously as Claude Code.

Key limitations: The context window presents the most significant constraint. While GPT-5.4 theoretically supports ~400,000 tokens, users report practical limits around 128-200K tokens with early summarization. For complex tasks that span multiple files or require deep understanding of an existing codebase, this limitation affects output quality.

Cursor positions itself as the AI coding tool for developers who want AI woven into every aspect of their workflow. As a standalone IDE built as a fork of VS Code, it attracts over 1 million users seeking deeper integration than plugin-based approaches.

Core strengths: Composer mode enables multi-file editing with context awareness across your entire project. Background cloud agents handle complex refactoring while you work on other tasks. Supermaven autocomplete achieves approximately 72% acceptance rates in benchmarks, significantly higher than alternatives for simple completions.

Measurable impact: Cursor completes SWE-bench tasks approximately 30% faster than Copilot for small-to-medium complexity work. First-pass correctness reaches ~73% overall, with ~42-45% of inline suggestions accepted without further editing. The tool excels at maintaining flow state, staying out of the way until needed.

Key limitations: Requires teams to switch editors, creating adoption friction. Token-based pricing through Cursor Pro can become unpredictable under heavy usage. On hard tasks, correctness drops to ~54% compared to Claude Code’s ~68%. The underlying model determines actual capabilities, making performance variable depending on configuration.

Claude Code operates as a terminal agent optimized for autonomous coding on complex tasks. Its 200K standard context window (up to 1M in enterprise/beta tiers) enables reasoning across entire codebases that would overwhelm other tools.

Core strengths: The largest context window available enables architectural changes, legacy system navigation, and debugging intermittent issues that require understanding thousands of files simultaneously. Agent teams enable parallel workflows. The 80.8% SWE-bench Verified score demonstrates superior performance on complex problems. VS Code and JetBrains extensions bring Claude Code into existing workflows for those who prefer IDE integration.

Measurable impact: Claude Code leads on first-pass correctness at ~78% overall, reaching ~68% on hard tasks versus Cursor’s ~54%. Pull request acceptance rates show 92.3% for documentation tasks and 72.6% for new features. Complex refactoring executes approximately 18% faster than Cursor.

Key limitations: The terminal-only primary interface imposes a learning curve on developers accustomed to IDE-centric workflows. Usage-based pricing for extended context can become expensive for teams regularly using 1M-token sessions. Performance degrades around 147-150K tokens before auto-compaction triggers, requiring prompt engineering to manage context effectively.

Interpreting benchmark data requires understanding that synthetic benchmarks don’t directly translate to productivity gains in your specific codebase and workflow patterns.

SWE-bench Verified measures complex correctness on real-world code tasks. Claude Code (Opus 4.5) achieves ~80.9%, Cursor ~48%, and Copilot ~55% in comparable benchmark sets. These differences become more pronounced on hard tasks that involve multi-step problems across multiple files.

HumanEval and MBPP test function-level code generation. Claude Opus 4.6 reaches ~65.4% on Terminal-Bench 2.0; Cursor’s newer Composer variants achieve ~61-62%. These benchmarks better predict inline suggestion quality than autonomous task completion.

Real-world accuracy patterns:

Interpretation guidance: Benchmark scores indicate ceiling performance under controlled conditions. Actual productivity impact depends on task distribution, codebase characteristics, and how well the tool matches your workflow patterns.

Synthesis:

Direct licensing costs:

Team cost scenarios:

Hidden costs matter:

Teams using CLI tools extensively may find Claude Code’s terminal agent a more accessible option despite the learning curve.

Developer resistance challenge: Teams using VS Code or JetBrains resist switching to Cursor’s standalone IDE, even though it’s a VS Code fork with a familiar interface. Exporting configurations, adjusting plugin sets, and changing muscle memory create friction that individual developers often avoid.

Solution:

Code privacy challenge: All three tools process code through external AI models, raising IP protection concerns. Different tools offer different guarantees about data retention and model training.

Solution:

The brutal truth: These tools report adoption metrics—suggestions accepted, completions generated, features used—but none tell you their actual impact on your DORA metrics. License adoption doesn’t equal delivery speed improvement.

Solution:

Specific measurement approaches (pros and cons of relying on DORA alone):

Tool choice depends on team size, existing IDE preferences, and the complexity distribution of your codebase work. GitHub Copilot vs. Cursor vs. Claude Code isn’t a simple “best tool” question. It’s a workflow fit question that requires measurement to answer definitively.

The game changer isn’t choosing the right answer among these tools. It’s implementing measurement infrastructure to track actual engineering impact rather than license deployment counts. Without that measurement, you’re guessing at ROI rather than proving it.

Related topics worth exploring: AI-assisted coding impact and best practices, engineering intelligence platforms for DORA metrics tracking, AI code review automation, and hybrid tool strategies for different tasks across your organization.

Which AI coding tool has the best ROI for engineering teams?

ROI depends on three factors: team size, codebase complexity, and measurement infrastructure. For enterprise teams prioritizing governance and minimal disruption, GitHub Copilot typically delivers fastest time-to-value. For teams doing heavy refactoring, Cursor’s multi-file capabilities justify the IDE migration cost. For complex architectures or legacy systems, Claude Code’s context window provides unique capabilities. Without measuring actual DORA metric impact, ROI claims remain speculative.

Can you use multiple AI coding tools together effectively?

Yes, hybrid approaches are increasingly common. Many teams use GitHub Copilot for daily inline suggestions, Cursor for complex refactoring sessions, and Claude Code for architectural analysis or debugging multi-step problems. The key is matching each tool to specific task types rather than forcing single-tool standardization, drawing on broader AI coding assistant evaluations and developer productivity tooling strategies.

How do you measure if AI coding tools are actually improving delivery speed?

Focus on DORA metrics: deployment frequency, lead time for changes, change failure rate, and mean time to recovery. Track these metrics before AI tool adoption, then measure changes over 30-90 day periods. Compare PR cycle times for AI-influenced commits versus non-AI commits. Engineering intelligence platforms like Typo provide this measurement across all three tools, and resources such as a downloadable DORA metrics guide can help structure your approach.
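As a minimal sketch of the before-and-after comparison, the function below derives the four DORA metrics from a deployment log for one measurement window. The record fields (`commit_at`, `deployed_at`, `failed`, `restored_at`) and the sample data are assumptions; run it once on a pre-adoption window and once on a post-adoption window, then compare.

```python
from datetime import datetime
from statistics import median

# Hypothetical deployment log for one 14-day window.
# "restored_at" is set only for deployments that failed in production.
deployments = [
    {"commit_at": datetime(2026, 3, 2, 8), "deployed_at": datetime(2026, 3, 2, 18),
     "failed": False, "restored_at": None},
    {"commit_at": datetime(2026, 3, 4, 8), "deployed_at": datetime(2026, 3, 5, 4),
     "failed": False, "restored_at": None},
    {"commit_at": datetime(2026, 3, 9, 8), "deployed_at": datetime(2026, 3, 10, 14),
     "failed": True, "restored_at": datetime(2026, 3, 10, 16)},
    {"commit_at": datetime(2026, 3, 11, 8), "deployed_at": datetime(2026, 3, 13, 0),
     "failed": False, "restored_at": None},
]


def hours(delta):
    return delta.total_seconds() / 3600


def dora_metrics(deploys, window_days):
    """Deployment frequency, lead time, change failure rate, and recovery time."""
    lead_times = [hours(d["deployed_at"] - d["commit_at"]) for d in deploys]
    failures = [d for d in deploys if d["failed"]]
    recoveries = [hours(d["restored_at"] - d["deployed_at"]) for d in failures if d["restored_at"]]
    return {
        "deploys_per_week": len(deploys) / (window_days / 7),
        "median_lead_time_hours": median(lead_times) if lead_times else None,
        "change_failure_rate": len(failures) / len(deploys) if deploys else None,
        "mean_recovery_hours": sum(recoveries) / len(recoveries) if recoveries else None,
    }


baseline = dora_metrics(deployments, window_days=14)
```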

Which tool is best for teams using legacy codebases?

Claude Code’s 1M token context window makes it uniquely capable of reasoning across very large codebases without losing context. It can analyze entire codebases that would exceed other tools’ limits. For legacy systems requiring understanding of interconnected components across hundreds of files, this context advantage is significant.

What’s the difference between AI code completion and autonomous coding?

Code completion provides inline suggestions as you write code: high frequency, immediate, minimal disruption. Autonomous coding executes entire tasks from plain-language descriptions, making multi-file changes, generating API endpoints, or refactoring components. Completion optimizes flow state for solo developer work; autonomous agents leverage AI for complex tasks that would otherwise require hours of manual effort.

How do enterprise security requirements affect tool choice?

GitHub Copilot Enterprise offers the most comprehensive compliance features: SOC 2 certification, IP indemnification, organizational policy controls, and explicit guarantees about code not being used for model training. Cursor’s enterprise features are less publicly documented. Claude Enterprise offers compliance plans, but terminal-based workflows may require additional security review. Data retention terms and related policies vary by tier, so evaluate enterprise agreements carefully.

PR cycle time measures the duration from pull request creation to merge into the main branch—and it’s the most actionable metric engineering leaders can move quickly. Code review cycle time, specifically, is the period from when a pull request is submitted until it is merged, serving as a critical indicator of development velocity and team collaboration efficiency. Elite teams achieve total cycle times under 24 hours, while median performers take 2-5 days. That gap represents days of delayed features, slower feedback loops, and compounding context switching costs across your entire development pipeline. High code review cycle times often indicate communication gaps, unclear requirements, or overburdened reviewers, while consistently low cycle times suggest efficient collaboration and well-defined review processes.

This guide covers how to measure PR cycle time components, break down the different phases of the cycle, interpret benchmarks for your team size, diagnose root causes of delays, and implement proven reduction strategies. As an essential part of DORA metrics for engineering performance, understanding PR cycle time is crucial for evaluating engineering team performance and efficiency and for appreciating why PR cycle time is often a better metric than velocity. The target audience is engineering managers, VPs of Engineering, and team leads managing 5-50 developers who want to accelerate their software development process without sacrificing code quality.

The short answer: Reduce PR cycle time through smaller PRs (< 200 lines), automated triage and reviewer assignment, clear code ownership, and AI-powered pre-screening that catches issues before human reviewers engage. Keeping pull requests small and manageable is key—research shows that PRs with over 200 changes often deter reviewers, while smaller PRs lead to quicker, more effective code reviews.

By the end of this guide, you will:

PR cycle time, often referred to as code review cycle time, is the total elapsed time from when a pull request is opened until it successfully merges into the main branch. This key metric measures the duration of the code review process and is central to DORA’s Lead Time for Changes—one of the four key metrics that distinguish elite engineering organizations from average performers. Understanding cycle time vs lead time within DORA metrics clarifies how PR cycle time fits into broader delivery performance. Code review cycle time can be broken down into different phases, such as initial development, waiting time, and review, to pinpoint where delays occur and optimize each segment for efficiency.

Understanding cycle time requires breaking it into these distinct phases, because the interventions for each are different. Tracking other pull request metrics—like PR Pickup Time and PR size—alongside PR review time and overall cycle time helps teams identify bottlenecks and target improvements more effectively. A team with high PR pickup time needs different solutions than one with slow merge times. High cycle time is often a sign of inefficiency and can indicate hidden problems within the workflow. Shorter PR cycle times usually indicate smoother workflows, while longer cycle times often signal hidden problems such as unclear ownership or overloaded reviewers.

PR pickup time measures the duration from PR creation until the first reviewer begins reviewing. This is the waiting period where new code sits idle, and it typically dominates overall cycle time.

In an analysis of 117,413 reviewed pull requests, median pickup time was approximately 0.6 hours—but the P90 (slowest 10%) reached 128.9 hours. That’s over five days of waiting before anyone even looks at the code change.

High PR pickup time correlates directly with reviewer availability, team awareness of pending reviews, and lack of automated assignment. When it’s unclear who should review a PR, developers passively wait for someone else to pick it up. Ensuring the team is promptly notified when a PR exists is essential to avoid unnecessary delays and keep the workflow moving.

Managing review requests and making sure PRs are reviewed in a timely manner is crucial for reducing pickup time. Dashboards that track pending review requests and highlight bottlenecks can help teams respond faster and improve overall PR cycle time.

Review time covers the active period from first review through final approval. This includes reading code, providing feedback, waiting for author responses, and iterating through review rounds. Code reviews are an essential process for maintaining code quality and delivery speed, but complex PRs can significantly increase review time due to the additional effort required to understand and assess them.

Key factors affecting review duration include pull request size, code complexity, and reviewer experience with the codebase. Large pull requests take exponentially longer—not just because there’s more code, but because reviewers defer them, requiring more context switching when they finally engage. Common causes of long PR cycle times include large pull requests, unclear ownership, and overloaded reviewers, which can create bottlenecks in the review process.

The tradeoff between review depth and speed is real. Teams must decide how much scrutiny different types of changes warrant. A one-line configuration fix shouldn’t require the same review process as complex changes to core business logic.

Merge time is the interval from final approval to actual merge into the main branch. This phase is often overlooked, but in the same GitHub dataset, P90 merge delay reached 19.6 hours.

Technical factors driving merge time include CI/CD pipeline duration, merge conflicts with other branches, required compliance checks, and branch policies that restrict merge windows. Teams with long-running test suites or manual deployment gates see this phase balloon.

Understanding each component matters because you can’t fix what you don’t measure. A team might assume review quality is the problem when actually their developers are waiting days for the first comment. The next section establishes benchmarks so you can identify where your team falls.
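The three phases described above can be computed directly from four timestamps per pull request. This is a sketch under the assumption that your Git host exposes the time of creation, first review, final approval, and merge; the function and field names are illustrative.

```python
from datetime import datetime


def hours_between(start, end):
    return (end - start).total_seconds() / 3600


def pr_phases(opened_at, first_review_at, approved_at, merged_at):
    """Split total PR cycle time into pickup, review, and merge phases."""
    phases = {
        "pickup_hours": hours_between(opened_at, first_review_at),
        "review_hours": hours_between(first_review_at, approved_at),
        "merge_hours": hours_between(approved_at, merged_at),
    }
    phases["total_hours"] = hours_between(opened_at, merged_at)
    return phases


def slowest_phase(phases):
    """Name of the phase that contributes the most time."""
    parts = {name: value for name, value in phases.items() if name != "total_hours"}
    return max(parts, key=parts.get)


example = pr_phases(
    opened_at=datetime(2026, 1, 5, 9, 0),
    first_review_at=datetime(2026, 1, 5, 15, 0),
    approved_at=datetime(2026, 1, 6, 11, 0),
    merged_at=datetime(2026, 1, 6, 12, 30),
)
```

Aggregating `slowest_phase` across a few weeks of PRs shows whether the team has a pickup problem, a review problem, or a merge problem.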

Industry benchmarks provide context for your team’s performance, but they require interpretation based on your specific situation. Metrics like code review cycle time and other pull request metrics—such as PR Pickup Time, overall cycle time, and PR size—are important benchmarks for assessing team performance and identifying bottlenecks in your workflow, especially when you follow the dos and don'ts of using DORA metrics effectively. A 24-hour cycle time means something different for a 5-person startup versus a 50-person team in regulated fintech. Frequent measurement of key performance indicators (KPIs) helps teams understand which strategies are effective in reducing PR cycle time and optimizing development velocity.

Based on aggregated data from DORA reports, Typo and CodePulse research, code review cycle time benchmarks break down as follows:

For teams of 5-50 engineers specifically: elite performers achieve under 12-24 hours total code review cycle time, with first review happening within four hours during business hours.

The median reviewed PR on public GitHub takes approximately 3 hours total—but P90 reaches 149 hours. That spread indicates most PRs move quickly, but a significant tail of delayed reviews drags down team velocity.
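Because the tail matters as much as the median, it helps to report both. The sketch below uses a nearest-rank percentile, which is one of several common definitions; other tools may interpolate and return slightly different values. The sample cycle times are invented.

```python
def percentile(values, pct):
    """Nearest-rank percentile for an integer pct between 1 and 100."""
    if not values:
        raise ValueError("percentile requires at least one value")
    ordered = sorted(values)
    rank = (pct * len(ordered) + 99) // 100  # ceiling of pct/100 * n, in integers
    return ordered[max(rank, 1) - 1]


# Hypothetical PR cycle times in hours for one team over a month.
cycle_hours = [0.5, 1, 1.5, 2, 2.5, 3, 4, 6, 30, 150]

p50 = percentile(cycle_hours, 50)
p90 = percentile(cycle_hours, 90)
```

Here the median is a few hours while the 90th percentile is more than a day, the same shape as the public GitHub figures above: most PRs move quickly, and a slow tail carries the delay.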

Effective measurement requires tracking each phase separately rather than just total duration. Breaking down the process into different phases enables more targeted improvements:

Tracking other pull request metrics such as PR Pickup Time and PR size alongside these phases provides additional insight into where delays or inefficiencies occur in the pull request process.

Consider business hours versus calendar time. An 18-hour cycle time that spans overnight isn’t the same as 18 hours during working hours. Some tools normalize for this; others require manual interpretation.
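If your tooling reports calendar time only, a small helper can normalize to working hours. The sketch assumes a Monday-to-Friday, 09:00 to 17:00 window and naive timestamps in a single time zone; holidays and distributed teams would need extra handling.

```python
from datetime import datetime, time, timedelta

WORK_START = time(9, 0)   # assumed working window
WORK_END = time(17, 0)


def business_hours(start, end):
    """Hours between start and end that fall inside Mon-Fri working hours."""
    total = timedelta()
    day = start.date()
    while day <= end.date():
        if day.weekday() < 5:  # Monday=0 ... Friday=4
            window_start = max(start, datetime.combine(day, WORK_START))
            window_end = min(end, datetime.combine(day, WORK_END))
            if window_end > window_start:
                total += window_end - window_start
        day += timedelta(days=1)
    return total.total_seconds() / 3600


# Opened Monday 16:00, merged Tuesday 10:00: 18 calendar hours.
overnight = business_hours(datetime(2026, 1, 5, 16, 0), datetime(2026, 1, 6, 10, 0))

# Opened Friday 16:00, merged Monday 10:00: the weekend is excluded.
over_weekend = business_hours(datetime(2026, 1, 9, 16, 0), datetime(2026, 1, 12, 10, 0))
```

Both examples come out to two working hours, even though the second spans almost three calendar days.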

Typo surfaces real-time PR analytics that break down these components automatically, helping engineering leaders identify bottlenecks without manual data collection. The platform tracks cycle time trends across teams and repos, flagging when metrics drift outside acceptable ranges and making it easier to track and improve DORA metrics across your SDLC.

Benchmarks shift based on team composition and business context:

Small teams (5-10 engineers): Expect shorter cycle times due to higher code familiarity and simpler coordination. Target <4 hours for elite performance.

Medium teams (10-50 engineers): Coordination overhead increases. Target <24 hours for strong performance. Cross-team reviews and code ownership complexity require explicit processes.

Regulated industries: Compliance requirements, security reviews, and audit trails legitimately extend cycle time. Focus on reducing variance and eliminating unnecessary delays rather than hitting startup-speed benchmarks.

High-risk code changes: Critical paths warrant thorough review despite longer cycle times. The goal isn’t uniform speed—it’s appropriate speed for each type of change.

With benchmarks established, the next section covers specific interventions proven to reduce cycle time.

These strategies come from teams that have achieved measurable improvements—not theoretical best practices. Setting WIP limits and actively managing review PRs are proven methods to reduce PR cycle time, as they help prevent bottlenecks and maintain a steady workflow. Each intervention addresses specific phases of the PR cycle and includes implementation guidance. Effective PR teams can save up to 40% of their time by streamlining processes and eliminating bottlenecks through structured workflows.

Pull request size is the single strongest predictor of cycle time. Typo data shows small PRs get picked up 20× faster than large ones. The relationship is exponential, not linear. Complex PRs—those with many files changed or large code diffs—tend to slow down reviews, increase the risk of bugs, and create bottlenecks for both authors and reviewers.

Implementation steps:

For example, a team working on a major refactor initially submitted a single complex PR with over 1,000 lines changed. Reviewers hesitated to pick it up, and the PR sat idle for days. After splitting the work into five smaller PRs, each focused on a specific module, reviews were completed within hours, and feedback was more actionable.

Smaller PRs benefit everyone: authors get faster feedback, reviewers maintain focus without context switching overload, and the team catches issues earlier in the development process. Keeping pull requests small and manageable significantly enhances the likelihood of timely reviews, as large or complex PRs often deter reviewers and delay progress.
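A size check like the following could run in CI or a bot to flag oversized pull requests. It uses the 200-line threshold discussed above; counting additions plus deletions and the split suggestion are simplifying assumptions, since real splits should follow module boundaries rather than line counts.

```python
SIZE_LIMIT = 200  # lines changed, the threshold discussed above


def size_report(additions, deletions, limit=SIZE_LIMIT):
    """Flag a PR as oversized and suggest how many PRs it might be split into."""
    changed = additions + deletions
    suggested = max(1, -(-changed // limit))  # ceiling division
    return {"lines_changed": changed, "oversized": changed > limit, "suggested_prs": suggested}


large = size_report(additions=800, deletions=200)
small = size_report(additions=50, deletions=20)
```

For the 1,000-line refactor in the example above, this naive rule suggests five PRs.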

When it’s unclear who should review a PR, it sits in limbo. Automated assignment eliminates this ambiguity and ensures that every team member is promptly notified when a PR exists, reducing the risk of overlooked or stalled pull requests. Managing review requests effectively—by tracking pickup times and monitoring pending review requests—helps teams identify bottlenecks and maintain steady progress.
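Automated assignment can be as simple as mapping changed paths to owners and picking the least-loaded eligible reviewer. The ownership map, names, and open-review counts below are hypothetical; hosted Git platforms offer their own ownership files and assignment rules, which this sketch does not attempt to reproduce.

```python
# Hypothetical ownership map: path prefix -> engineers who can review it.
# The empty prefix acts as a fallback owner for unmatched paths.
OWNERS = {
    "services/billing/": ["ana", "ben"],
    "services/auth/": ["chen"],
    "": ["dev"],
}


def owners_for(path, owners=OWNERS):
    """Owners of the longest matching path prefix."""
    best = max((prefix for prefix in owners if path.startswith(prefix)), key=len)
    return owners[best]


def assign_reviewer(changed_paths, author, open_reviews, owners=OWNERS):
    """Pick the eligible owner with the fewest open reviews, excluding the author."""
    candidates = {name for path in changed_paths for name in owners_for(path, owners)}
    candidates.discard(author)
    if not candidates:
        return None
    # Sort first so ties resolve deterministically by name.
    return min(sorted(candidates), key=lambda name: open_reviews.get(name, 0))


load = {"ana": 2, "ben": 1, "dev": 0}
first = assign_reviewer(["services/billing/invoice.py"], "ana", load)
second = assign_reviewer(["services/billing/a.py", "docs/readme.md"], "chen", load)
```

When no eligible reviewer exists (for example, the author is the only owner), the function returns `None` so the PR can be escalated rather than silently left unassigned.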

Implementation steps:

Clear expectations around response times eliminate ambiguity and ensure reviews and merges are completed in a timely manner, which is essential for maintaining workflow efficiency and reducing waiting.

Supporting practices:

Async norms work because they remove negotiation overhead. Reviewers know what’s expected; authors know when to escalate, helping the team consistently complete reviews and merges in a timely manner.

AI-powered pre-screening represents the largest recent advancement in reducing cycle time. These tools act as a first reviewer, catching issues before human reviewers engage and transforming how AI is used in the code review process.

Atlassian’s internal deployment of their AI code review agent reduced PR cycle time by approximately 45%. Their median time from open to merge had crept above 3 days, with pickup waits averaging 18 hours. After implementing AI pre-screening, the wait for first feedback dropped to effectively zero.

How AI code review helps:

Typo customers have seen substantial improvements: StackGen achieved 30% reduction in PR review time, and JemHR improved PR cycle time by 50%. These gains come from reducing review iterations—AI code reviews catch what would otherwise require human feedback rounds.

The balance between automation and human judgment matters. AI handles mechanical checks; humans focus on architecture, logic, and maintainability. This division makes both more effective.

Even teams committed to improvement hit obstacles. These are the most frequent bottlenecks and proven solutions. Setting WIP limits helps manage work-in-progress and prevent bottlenecks, while tracking other pull request metrics—such as PR Pickup Time, cycle time, and PR size—enables teams to monitor and optimize the entire pull request process. Additionally, mapping workflows visually, creating standard operating procedures (SOPs), and implementing a RACI matrix are effective strategies for improving PR processes and reducing cycle time.

Problem: Senior engineers become bottlenecks, reviewing most PRs while their queues grow.

Solution: Implement load balancing across team members. Cross-train developers on different code areas so multiple people can approve in each subsystem. Track review distribution metrics and adjust when imbalance appears.
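One way to picture load balancing is a rule that assigns each new PR to the eligible reviewer with the fewest open reviews. This is a minimal sketch under stated assumptions: `pick_reviewer`, the reviewer names, and the `open_reviews` counts are all hypothetical, and a real setup would pull these numbers from your Git hosting platform.

```python
from collections import Counter


def pick_reviewer(author: str, eligible: list[str], open_reviews: Counter) -> str:
    """Return the eligible reviewer with the fewest open reviews, excluding the author."""
    candidates = [r for r in eligible if r != author]
    if not candidates:
        raise ValueError("no eligible reviewer other than the author")
    # Ties are broken alphabetically so the result is deterministic.
    return min(candidates, key=lambda r: (open_reviews.get(r, 0), r))


# Hypothetical snapshot of current review queues.
open_reviews = Counter({"alice": 5, "bob": 1, "carol": 1})

reviewer = pick_reviewer("bob", ["alice", "bob", "carol"], open_reviews)
print(reviewer)
```

Because the senior engineer ("alice" here) already has the longest queue, the rule routes new work elsewhere, which only works if other developers have been cross-trained on that code area.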

Problem: PRs sit waiting because no one knows who should review them.

Solution: CODEOWNERS files combined with automated assignment rules. Define clear escalation paths for when owners are unavailable. Every directory should have at least two qualified reviewers.

Problem: Constant PR notifications interrupt deep work, leading developers to ignore them entirely.

Solution: Batch review sessions instead of interrupt-driven reviews. Configure intelligent notification filtering that surfaces urgent items while batching routine reviews. Some teams find dedicated “review o’clock” times effective.

Problem: Some changes genuinely can’t be decomposed easily, especially migrations or refactoring.

Solution: Establish different review processes for known-large changes. Use incremental migration strategies where possible. When large PRs are unavoidable, schedule dedicated review time with appropriate reviewers rather than expecting async turnaround.

Reducing PR cycle time requires a systematic approach across three dimensions: controlling PR size, automating triage and initial review, and establishing clear team processes. The teams seeing 30-50% improvements aren’t doing one thing differently—they’re applying multiple interventions that compound.

Immediate next steps:

Related areas to explore: Overall DORA metrics optimization connects PR cycle time to broader delivery performance, including CI/CD optimization using DORA metrics. Developer experience measurement helps identify whether cycle time improvements translate to actual productivity gains. Understanding how AI coding tools impact your metrics ensures you’re measuring what matters as development practices evolve.

See PR Analytics in Typo to track cycle time components and identify bottlenecks in real time. The platform surfaces where your team loses time across the entire code review process, enabling targeted interventions rather than guesswork.

What’s the difference between PR cycle time and lead time for changes?

PR cycle time measures from pull request creation to merge. DORA’s Lead Time for Changes spans from first commit to running in production, a broader measure that includes time before PR creation and deployment time after merge. PR cycle time is a subset of lead time and is typically the most actionable component for engineering teams to improve.
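The difference is easiest to see with timestamps. The sketch below uses made-up event times for a single change; the function names and the dictionary keys are assumptions for illustration, not fields from any specific analytics tool.

```python
from datetime import datetime


def hours_between(start: datetime, end: datetime) -> float:
    """Elapsed time between two events, in hours."""
    return (end - start).total_seconds() / 3600


def delivery_metrics(first_commit, pr_opened, pr_merged, deployed):
    """Compute PR cycle time (open to merge) and lead time (first commit to production)."""
    return {
        "pr_cycle_hours": hours_between(pr_opened, pr_merged),
        "lead_time_hours": hours_between(first_commit, deployed),
    }


# Hypothetical timeline for one change.
metrics = delivery_metrics(
    first_commit=datetime(2026, 3, 2, 9, 0),
    pr_opened=datetime(2026, 3, 2, 15, 0),
    pr_merged=datetime(2026, 3, 3, 15, 0),
    deployed=datetime(2026, 3, 3, 18, 0),
)
print(metrics)
```

In this timeline the PR cycle time is 24 hours while the lead time is 33 hours; the extra 9 hours come from work before the PR was opened and from deployment after merge.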

How do I convince my team to keep PRs smaller without sacrificing quality?

Frame it as reducing cognitive load, not cutting corners. Smaller PRs get faster, more thorough reviews because reviewers can actually focus. Share data: PRs under 200 lines get reviewed 20× faster. Start with guidelines rather than hard limits, and celebrate examples of good decomposition. Feature flags enable shipping incomplete features safely, removing the pressure to batch everything into large PRs.

Should we prioritize speed over thorough code review?

No—but the framing is misleading. Smaller PRs enable both speed and thoroughness. A reviewer spending 20 focused minutes on a 100-line PR catches more issues than spending 90 distracted minutes on a 500-line PR. Optimize for review quality per line of code, not absolute time spent. Reserve intensive review for high-risk changes; routine changes can move faster.

How does AI code review impact overall cycle time?

AI code review primarily reduces pickup time (providing instant first feedback) and review iterations (catching issues authors would otherwise need to fix after human review). Atlassian saw 45% cycle time reduction; Exceeds AI data shows PRs with AI assistance close in 2.1 days versus 4.2 days without. The tradeoff: some research indicates AI-assisted PRs may have higher defect density, so human review remains essential for complex changes.

What’s a realistic target for teams just starting to optimize PR cycle time?

Start with reducing time to first review by 25% and ensuring 80%+ of PRs stay under 200 lines. For a team currently at 3-5 day cycle times, target reaching <48 hours within a quarter. Elite performance (<12 hours) typically requires multiple optimization cycles. Focus on consistency before speed—reducing variance in your slowest PRs often matters more than improving your already-fast ones.
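These starting targets can be checked against a sample of recent PRs. The sketch below is a rough illustration: the record fields `lines_changed` and `cycle_hours` are hypothetical, and using the median as the cycle-time statistic is an assumption, since the target above does not say which statistic to use.

```python
from statistics import median


def summarize(prs, max_lines=200, target_hours=48, min_small_share=0.80):
    """Compare a batch of PRs against the size and cycle-time starting targets."""
    small_share = sum(1 for p in prs if p["lines_changed"] < max_lines) / len(prs)
    median_hours = median(p["cycle_hours"] for p in prs)
    return {
        "small_share": small_share,
        "median_cycle_hours": median_hours,
        "meets_size_goal": small_share >= min_small_share,
        "meets_cycle_goal": median_hours < target_hours,
    }


# Hypothetical sample of five merged PRs.
sample = [
    {"lines_changed": 50, "cycle_hours": 6},
    {"lines_changed": 120, "cycle_hours": 20},
    {"lines_changed": 180, "cycle_hours": 30},
    {"lines_changed": 199, "cycle_hours": 40},
    {"lines_changed": 640, "cycle_hours": 110},
]
summary = summarize(sample)
print(summary)
```

The single 640-line PR does not move the median, but it is exactly the kind of slow outlier worth examining when the goal is to reduce variance.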

This article is for software developers, project managers, and technical leads who want to understand the SDLC coding phase in order to deliver software efficiently and at high quality. The coding phase is the stage in the Software Development Life Cycle (SDLC) where your project transitions from design documents to actual, working software. This guide gives a comprehensive overview of what happens during this critical stage, who is involved, and which best practices and tools are essential for success.

The SDLC coding phase is when developers convert software design into code, following best practices such as adhering to coding standards, using version control, conducting code reviews, writing clean and maintainable code, ensuring modularity for scalability, performing unit testing, documenting code, and leveraging CI/CD for automation.

The Software Development Life Cycle (SDLC) consists of seven essential phases: Planning, Requirements Analysis, Design, Coding, Testing, Deployment, and Maintenance. It is the backbone of modern software development, giving development teams a structured approach for turning ideas into high-quality software products and guiding the process from start to finish. By following the SDLC, organizations can manage complexity, align with business objectives, and ensure that the final product meets user expectations.

A disciplined SDLC helps development teams minimize risks, control costs, and deliver reliable software that stands up to real-world demands. Whether you’re building a new SaaS platform or enhancing an existing system, a robust software development life cycle ensures that every stage of the development process is accounted for, resulting in software that is both functional and maintainable throughout its lifetime.

With a clear understanding of the SDLC’s structure, let’s explore the different models used to implement these phases.

Selecting the right SDLC model is a critical decision that shapes the entire software development process. There are several popular SDLC models, each designed to address different project needs and team dynamics:

Choosing the right SDLC model depends on factors like project complexity, team size, stakeholder involvement, and the need for adaptability. For example, the Agile model is often preferred for complex projects where requirements may evolve, while the Waterfall model can be effective for projects with stable, well-understood requirements. Understanding the strengths and limitations of different SDLC models helps teams select the right methodology for their unique context.

With an understanding of SDLC models, let's focus on the coding phase and its role in the software development process.

The Coding phase in the Software Development Life Cycle (SDLC) is when engineers and developers start converting the software design into tangible code.

The coding phase transforms design artifacts (architecture diagrams, API contracts, and database schemas) into working software components. This is the development stage where abstract concepts become executable code that users can interact with. Developers translate the system design into code that functions as intended, with the focus on making its key components reliable, maintainable, and efficient.

Key activities during the SDLC coding phase include adhering to coding standards, utilizing version control, and conducting thorough AI-assisted code reviews to ensure quality.

Before writing code begins, the coding phase depends on validated requirements and approved designs. During the Coding phase, developers use an appropriate programming language to write the code, guided by the Software Design Document (SDD) and coding guidelines. Software developers need clear inputs: system architecture documentation, data flow diagrams, API specifications, and detailed component designs. Without these, teams risk building features that don’t match project requirements.

Once implementation wraps up, the coding phase feeds directly into the testing phase and deployment phase through:

The development phase serves dual purposes. It’s both a production step where software development teams write code and a critical feedback point. During implementation, developers often discover design gaps, requirement ambiguities, or technical constraints that weren’t visible during planning. This makes the coding phase essential for risk assessment and continuous improvement throughout all SDLC phases.

Now that we’ve defined the coding phase and its importance, let’s look at how to prepare for successful implementation.

Strong preparation during late design and early implementation reduces costly rework. For projects kicking off in Q1 2025, getting this foundation right determines whether your team delivers high quality software on schedule.

Before any developer opens their IDE, these artifacts must exist:

Development teams need alignment on how they’ll work together:

Before coding starts, every developer needs:

A team is “ready to code” when any developer can clone the repository, run the build, and execute tests within 30 minutes of setup.

With preparation complete, let’s examine the core activities that define the coding phase.

The coding phase isn’t just writing code—it’s a structured set of activities spanning design refinement to integration. Software engineering practices have evolved significantly, and modern coding involves collaboration, automation, and continuous validation.

Typical development process tasks include:

The organization of these tasks can vary depending on the software development model chosen. Different software development models, such as Waterfall, Agile, or DevOps, influence how the coding phase is structured, managed, and integrated with other SDLC stages.

A typical daily developer workflow looks like this:

How coding is organized depends on your software development methodology:

Large features must be decomposed into manageable pieces. A feature like “User account management” planned for a 2025 release breaks down into:

Each component becomes a user story with acceptance criteria and technical subtasks tracked in project management tools like Jira, Azure Boards, or Linear.

Estimation practices help teams plan sprints effectively:

Project managers use these estimates to balance workload across the team and ensure the project scope remains achievable within the timeline, feeding directly into effective sprint planning and successful sprint reviews in Agile teams.

The tech stack is typically established during the design phase, but concrete framework choices often get finalized during coding. Teams evaluate options based on:

These aren’t exhaustive catalogs—the right choice depends on your project requirements and team capabilities and should align with broader SDLC best practices for software development.

Modern applications follow layered architectures that separate concerns:

When implementing a use case like “customer places an order on 1 July 2025,” developers translate requirements into concrete code:

Throughout this process, it is essential to validate the software's functionality to ensure that the implemented features meet user needs and perform efficiently, supported by practices such as static code analysis for early defect detection.

Design patterns support clean implementation:

This separation of concerns creates loosely coupled architecture where changes in one layer don’t cascade unpredictably through the system.
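To make the layering concrete, here is a minimal sketch of the “customer places an order” use case split into a domain object, a persistence layer, and a service layer. Everything in it is hypothetical: the class names, the in-memory repository, and the validation rule are assumptions chosen for illustration, and the repository pattern is just one common way to achieve this separation.

```python
from dataclasses import dataclass
from datetime import date
from typing import Protocol


@dataclass(frozen=True)
class Order:
    """Domain object: plain data with no knowledge of storage or HTTP."""
    customer_id: str
    total_cents: int
    placed_on: date


class OrderRepository(Protocol):
    """Persistence contract the service layer depends on."""
    def save(self, order: Order) -> None: ...


class InMemoryOrderRepository:
    """Stand-in for a database-backed repository (useful in tests)."""
    def __init__(self) -> None:
        self.orders: list[Order] = []

    def save(self, order: Order) -> None:
        self.orders.append(order)


class OrderService:
    """Business logic layer: validates input, then delegates storage."""
    def __init__(self, repository: OrderRepository) -> None:
        self._repository = repository

    def place_order(self, customer_id: str, total_cents: int, placed_on: date) -> Order:
        if total_cents <= 0:
            raise ValueError("order total must be positive")
        order = Order(customer_id, total_cents, placed_on)
        self._repository.save(order)
        return order


repo = InMemoryOrderRepository()
service = OrderService(repo)
order = service.place_order("customer-42", 2599, date(2025, 7, 1))
print(order, len(repo.orders))
```

Because `OrderService` depends only on the `OrderRepository` contract, swapping the in-memory store for a real database would not require touching the business logic.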

With the core activities outlined, let’s look at the tools and environments that support efficient coding.